Kafka performance tuning can feel like a black box, and there is no one-size-fits-all answer. Drawing on our experience of delivering more than 30 enterprise projects in the last 18 months, we discuss some best practices for monitoring and improving the performance of Kafka. Kafka is a distributed streaming platform that is widely used for building real-time data pipelines and streaming applications. Monitoring and optimising its performance ensures that it can handle the high-throughput, low-latency requirements of modern data-driven applications.

One of the key aspects of improving Kafka performance is optimising the topic and partition configuration. More partitions generally improve throughput, because they let more producers and consumers work in parallel. Past a certain point, however, each extra partition adds overhead for the brokers (more replication traffic and longer leader elections, for example) and performance degrades. It is important to find the right balance by measuring throughput and latency as you change the partition count.
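A widely used rule of thumb for a starting partition count is to divide the target throughput by the throughput you have measured for a single partition, on both the producer side and the consumer side, and take the larger result. The sketch below illustrates that arithmetic. The function name and the figures are hypothetical, and the result is only a starting point to validate against your own measurements.

```python
import math


def estimate_partitions(target_mb_s: float,
                        producer_mb_s_per_partition: float,
                        consumer_mb_s_per_partition: float) -> int:
    """Starting-point partition count: max(target/producer, target/consumer), rounded up."""
    if min(target_mb_s,
           producer_mb_s_per_partition,
           consumer_mb_s_per_partition) <= 0:
        raise ValueError("throughput figures must be positive")
    needed_for_producers = target_mb_s / producer_mb_s_per_partition
    needed_for_consumers = target_mb_s / consumer_mb_s_per_partition
    return math.ceil(max(needed_for_producers, needed_for_consumers))


# Illustrative figures only: measure per-partition throughput in your own environment.
print(estimate_partitions(target_mb_s=200,
                          producer_mb_s_per_partition=20,
                          consumer_mb_s_per_partition=12.5))
```

Whichever side is slower per partition drives the estimate, which is why both measurements are needed.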

For consumers, there are two important factors to consider: parallelism and commit batching. Parallelism refers to running consumers in a consumer group, so that the topic's partitions are shared between them and read in parallel. Increasing the number of consumers in a group, up to the number of partitions, can help keep up with the data being sent from producers and brokers. Commit batching involves committing the offsets of consumed messages in batches rather than individually, which improves performance by reducing the overhead of a separate commit for each message. Think about which might be more beneficial for your use case.
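Both ideas can be sketched without a real Kafka client. In the example below, `active_consumers` and `consume_with_batched_commits` are hypothetical helpers, and `commit` stands in for whatever commit call your client library provides. It is a simplified model under those assumptions, not a client implementation.

```python
from typing import Callable, Iterable


def active_consumers(consumers_in_group: int, partitions: int) -> int:
    """Within one group a partition is read by at most one consumer,
    so consumers beyond the partition count sit idle."""
    return min(consumers_in_group, partitions)


def consume_with_batched_commits(offsets: Iterable[int],
                                 commit: Callable[[int], None],
                                 commit_every: int) -> None:
    """Process records in order and commit once per `commit_every` records."""
    if commit_every < 1:
        raise ValueError("commit_every must be at least 1")
    uncommitted = 0
    last_offset = None
    for offset in offsets:
        # ... process the record here ...
        last_offset = offset
        uncommitted += 1
        if uncommitted == commit_every:
            commit(last_offset + 1)  # the committed offset is the next one to read
            uncommitted = 0
    if uncommitted:
        commit(last_offset + 1)  # commit the final partial batch


committed = []
consume_with_batched_commits(range(10), committed.append, commit_every=4)
print(len(committed), "commits instead of 10")
```

Larger commit batches mean fewer commit requests, but also more messages to reprocess if a consumer fails between commits.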

For producers, there are several settings that can be tuned to optimise performance. One important setting is the acknowledgement configuration, which determines how many replicas of a message must acknowledge its receipt before the producer considers it successfully sent. This setting can be adjusted to balance data integrity against speed. First check the defaults, as it is likely that all producers in your environment use the same acknowledgement setting.
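The acknowledgement setting is the producer property `acks`, which accepts `0`, `1` or `all`. The sketch below groups those values into named profiles. The profile names and the helper function are hypothetical, and the resulting dictionary would still need to be passed to your client library in whatever form it expects.

```python
# Producer `acks` values, from fastest to safest.
ACKS_PROFILES = {
    "fastest": {"acks": "0"},     # do not wait for any broker acknowledgement
    "balanced": {"acks": "1"},    # wait for the partition leader only
    "safest": {"acks": "all"},    # wait for all in-sync replicas
}


def producer_overrides(profile: str) -> dict:
    """Return a copy of the settings for a named profile."""
    try:
        return dict(ACKS_PROFILES[profile])
    except KeyError:
        raise ValueError(f"unknown profile: {profile!r}") from None


print(producer_overrides("safest"))
```

Keeping the choice in one place makes it easier to see which producers deviate from the environment-wide default.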

Other settings to consider are the buffer size and batch size. The buffer size determines how much data the producer can hold in memory while it waits to be sent to the broker, while the batch size determines the maximum size of each batch of messages sent to the broker. Adjusting these settings can help optimise throughput and reduce network overhead.
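In the Java producer these two settings are `buffer.memory` and `batch.size`, both expressed in bytes. The sketch below builds a small config dictionary and checks how the two values relate. The helper names are hypothetical, and the default values shown (32 MiB and 16 KiB) are the documented Java client defaults at the time of writing, so verify them against your client version.

```python
def batching_config(batch_size_bytes: int = 16_384,
                    buffer_memory_bytes: int = 33_554_432) -> dict:
    """Build producer batching settings, rejecting obviously inconsistent values."""
    if batch_size_bytes <= 0 or buffer_memory_bytes <= 0:
        raise ValueError("sizes must be positive")
    if batch_size_bytes > buffer_memory_bytes:
        raise ValueError("batch.size cannot exceed buffer.memory")
    return {"batch.size": batch_size_bytes, "buffer.memory": buffer_memory_bytes}


def batches_in_buffer(config: dict) -> int:
    """How many full batches fit in the producer buffer at once."""
    return config["buffer.memory"] // config["batch.size"]


defaults = batching_config()
larger_batches = batching_config(batch_size_bytes=131_072)  # 128 KiB, illustrative
print(batches_in_buffer(defaults), batches_in_buffer(larger_batches))
```

Raising the batch size without raising the buffer reduces the number of batches that can be in flight, which matters for producers that write to many partitions.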

When tuning Kafka, it is important to consider the trade-offs between different performance metrics, such as throughput, latency, data integrity, and cost. For example, sacrificing data integrity for speed may be acceptable in certain applications, while others may prioritise data integrity over speed.

Additionally, the cost and management implications of scaling Kafka should be taken into account. Adding more brokers and partitions can improve performance, but it also increases the management complexity and operational overhead costs. It is important to balance the need for performance with the available resources and budget.

To effectively monitor and manage a Kafka environment, automation is key. As the system scales, manual monitoring and management become impractical across such a large amount of infrastructure. Automation allows for proactive monitoring, alerting, and performance optimisation.

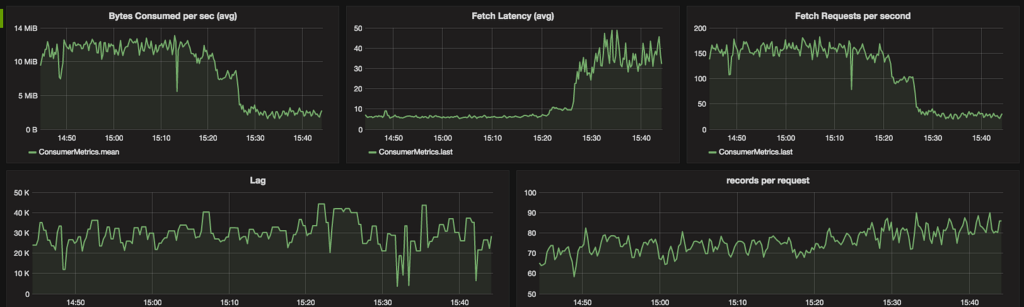

Monitoring should cover both the Kafka components and the underlying hardware. Key metrics to monitor include the number of active controllers, under-replicated partitions, offline partitions, and consumer lag. Additionally, metrics such as log flush latency and network interface performance should be monitored to identify potential bottlenecks.
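As a rough illustration of how these metrics turn into alerts, the sketch below evaluates a snapshot of the four key values. The `ClusterSnapshot` type, the function names and the lag threshold are all hypothetical. In practice the values would come from your monitoring system, and the thresholds depend on your workload.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ClusterSnapshot:
    active_controllers: int
    under_replicated_partitions: int
    offline_partitions: int
    max_consumer_lag: int


def consumer_lag(log_end_offset: int, committed_offset: int) -> int:
    """Lag for one partition: messages written but not yet committed as consumed."""
    return max(log_end_offset - committed_offset, 0)


def find_alerts(snapshot: ClusterSnapshot, max_lag: int = 10_000) -> List[str]:
    """Return a human-readable alert for each metric outside its healthy range."""
    alerts = []
    if snapshot.active_controllers != 1:
        # A healthy cluster has exactly one active controller.
        alerts.append(f"expected 1 active controller, found {snapshot.active_controllers}")
    if snapshot.under_replicated_partitions > 0:
        alerts.append(f"{snapshot.under_replicated_partitions} under-replicated partitions")
    if snapshot.offline_partitions > 0:
        alerts.append(f"{snapshot.offline_partitions} offline partitions")
    if snapshot.max_consumer_lag > max_lag:  # threshold is an assumption
        alerts.append(f"consumer lag {snapshot.max_consumer_lag} exceeds {max_lag}")
    return alerts


lag = consumer_lag(log_end_offset=52_000, committed_offset=40_000)
for alert in find_alerts(ClusterSnapshot(1, 3, 0, lag)):
    print(alert)
```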

Open source projects such as Prometheus and Grafana can provide comprehensive monitoring and performance data for Kafka environments. These tools automatically scrape metrics covering throughput, latency, data integrity, and capacity, allowing users to make informed decisions about architectural changes and operational actions. By creating monitoring and alerting dashboards, blind spots can be eliminated and surprises minimised. With the right performance data readily available, troubleshooting and resolving issues become faster and more efficient.

Monitoring and optimising the performance of Kafka is crucial for ensuring the smooth operation of data pipelines and streaming applications. By tuning at the topic and partition level, optimising for consumers and producers, and considering trade-offs and resource constraints, Kafka environments can be fine-tuned for optimal performance.

Using the right automation and comprehensive monitoring tools plays a vital role in managing and maintaining Kafka environments at scale. With the right tools and practices in place, organisations can leverage the full potential of Kafka and ensure the reliability and efficiency of their data-driven applications.

As always, there is no silver bullet in performance tuning. Consult an expert if you are unsure. Contact us!

For more content:

How to take your Kafka projects to the next level with a Confluent preferred partner

Event driven Architecture: A Simple Guide

Watch Our Kafka Summit Talk: Offering Kafka as a Service in Your Organisation

Have a conversation with a Kafka expert to discover how we can help you adopt Apache Kafka in your business.
